The Validation Lens

A Discipline for Learning Under Uncertainty

Part of the Adaptive Fitness System (AFS) series. This post unpacks Level 1, Building Block 3: the AFS Validation Lens.

My grandfather had a saying: “Before you dig, know where you’re going to put the dirt.”

He was talking about gardening. But I’ve spent twenty years watching organizations skip that exact question before launching change programs worth millions of euros. They dig enthusiastically. They dig confidently. They dig in formation, with frameworks and governance boards and steering committees. And then, somewhere around month fourteen, they look up and realize they have nowhere to put the dirt.

That’s not a planning failure. That’s a validation failure. Nobody asked the question early enough.

Where we are

We’ve been building the Adaptive Fitness System piece by piece.

Post 27 introduced the full architecture — two levels, building blocks on the bottom, capability domains on top.

Post 29 gave you the Four Questions as a recurring fitness check, and the Fitness Dial as a way to calibrate how hard you’re pushing any dimension at a given moment.

Post 30 introduced the AFS Compass — four pulls that are always in tension: Value to the North, Learning to the East, Stability to the South, Control to the West. The Compass doesn’t tell you where to go. It tells you which direction you’re being pulled, and whether that pull is appropriate.

Today we’re adding Building Block 3: the Validation Lens.

It’s the one that, honestly, I wish I’d had a name for fifteen years ago.

The pattern that made me realize we needed this

I’ve watched more than a few transformation programs follow the same arc:

Big announcement. Ambitious roadmap. Careful planning. Phased rollout. Post-implementation review at month eighteen. Findings: adoption was lower than expected, the original assumptions didn’t hold, the context shifted during execution, and the outcomes we were hoping for didn’t materialize quite the way we’d imagined.

Recommendations: adjust the roadmap. Do more communication. Try harder next year.

The problem isn’t the execution. The problem is that nobody built in a mechanism for learning while moving. Everything was designed to execute — not to discover. The plan was treated as a conclusion, not a hypothesis. And by the time the evidence arrived, sunk costs had done their work on everyone’s judgment.

This is what I mean by validating too late. It’s not incompetence. It’s an organizational default — one that’s almost invisible until you’ve burned enough time and money experiencing it.

What the Validation Lens actually is

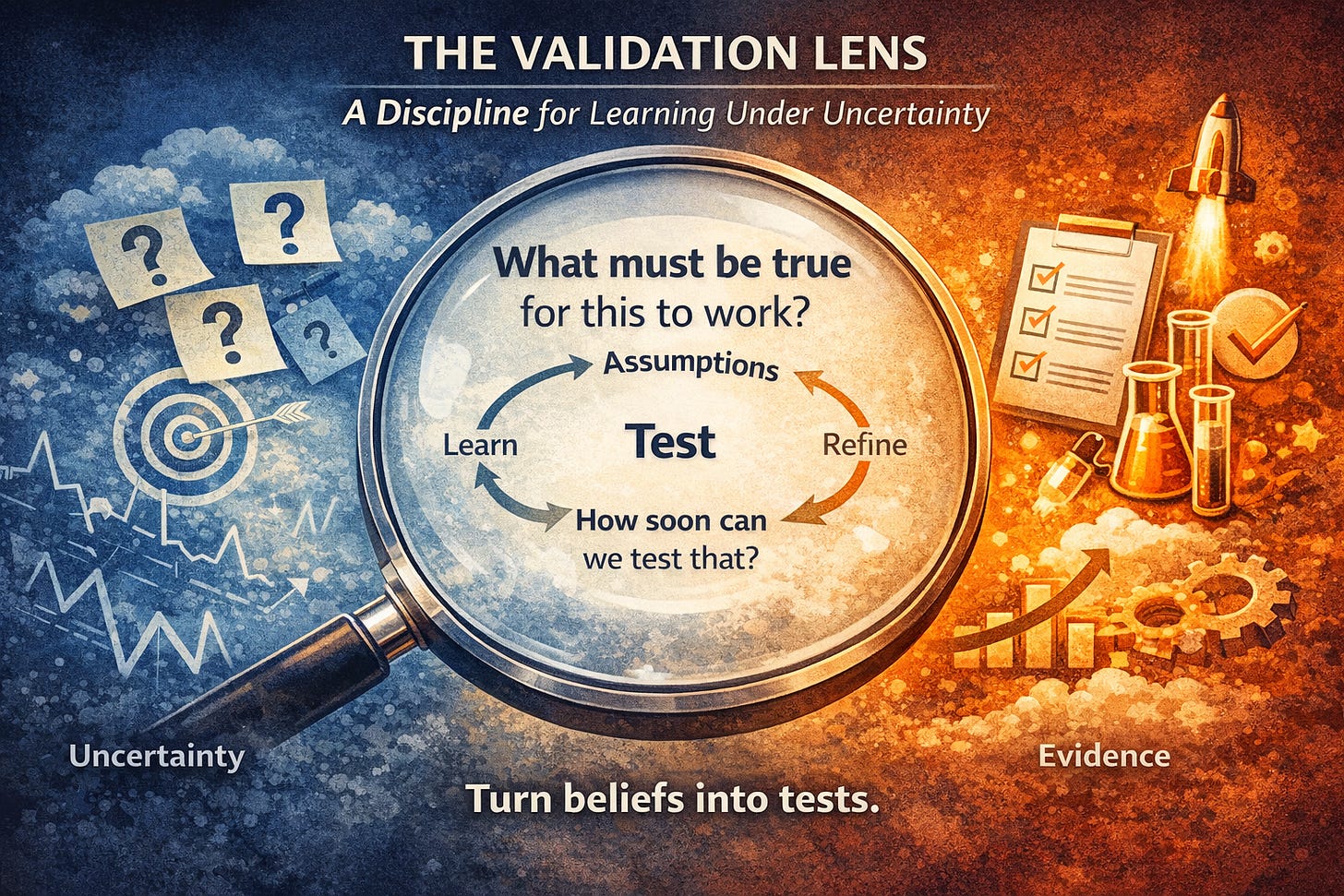

The Validation Lens is a discipline, not a tool, a ceremony, or a checklist. It’s a habit of mind that has to be deliberately built into the way change work gets done.

Its core question is deceptively simple:

What must be true for this decision to work — and how soon can we test that?

That’s it. Two parts.

The first part, what must be true, forces you to surface assumptions. Not all assumptions. The ones that matter. The ones that, if wrong, would cause the whole thing to unravel. Every decision in a change program rests on a stack of implicit beliefs about how users will respond, how the organization will adapt, how fast teams will learn, and what leadership will tolerate. Most of those beliefs are never written down. They live in slide decks and conversations and “everybody knows” wisdom. The Validation Lens asks you to write them down, say them out loud, and look at them directly.

The second part — how soon can we test that — is where the discipline lives. Because the natural answer to that question, in most organizations, is “after we roll it out.” And that’s precisely the answer you’re trying to avoid.

The Lens pushes you toward the smallest possible test, the earliest possible signal. Not because small is always better, but because the cost of discovering you were wrong drops dramatically when you discover it before you’ve fully committed.

What it looks like in practice

I once worked with a team redesigning how their value stream handled incoming work. They had a model in mind. They were confident. They’d seen it work elsewhere.

I asked them: what has to be true for this to work here?

They listed three things quickly. A fourth took a moment. A fifth came up only when someone said “well, we’re assuming the product owners will actually change how they write acceptance criteria” — and the room went quiet, because everyone knew that was the hardest assumption in the stack.

I asked: how long would it take to test just that one assumption?

They said two weeks.

I suggested we test it before redesigning anything else.

Two weeks later, the product owners had not changed how they wrote acceptance criteria. Not because they were resistant, but because nobody had yet agreed with them on what good criteria looked like. There was an upstream disagreement that had never been surfaced.

That discovery saved them several months of building on a cracked foundation.

That’s the Validation Lens at work. Not dramatic. Not a methodology. Just an honest question asked early enough to matter.

Small cycles. Early signals. Honest assumptions.

There are three operating principles built into the Lens, and they reinforce each other.

Small validation cycles keep the cost of being wrong low. You’re not trying to prove the whole thing works. You’re trying to falsify the most dangerous assumption as cheaply as possible. There’s a direct line here to scientific thinking — you design for disproof, not confirmation. The instinct in most organizations runs the other way.

Early signal detection means you need to know what you’re watching for before you start. Signals aren’t obvious. They often look like noise. Deciding in advance what would constitute a meaningful signal — and what wouldn’t — is part of the discipline. This is harder than it sounds when everyone is invested in good news.

Honest assumption exposure is the most uncomfortable part. Assumptions feel like the ground you’re standing on. Surfacing them feels like questioning the plan, which often feels like disloyalty to the people who made it. The Lens creates a small amount of structured safety for that discomfort. It’s not “we think this is wrong.” It’s “here is what would have to be true for this to be right — let’s be explicit about that.”
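
One way to make the three principles concrete is a simple assumption log. The sketch below is illustrative only and not part of AFS: the field names, the 1-to-5 impact scale, the ranking rule, and every entry except the acceptance-criteria one are assumptions invented for the example. It writes each assumption down, records before the test what would count as a signal either way, and picks the most dangerous assumption that is cheapest to test.

```python
from dataclasses import dataclass


@dataclass
class Assumption:
    statement: str             # what must be true for the decision to work
    impact_if_wrong: int       # hypothetical scale: 1 (minor) to 5 (everything unravels)
    days_to_test: int          # length of the smallest test we can think of
    confirming_signal: str     # decided before the test starts
    disconfirming_signal: str  # decided before the test starts


def next_to_test(log: list[Assumption]) -> Assumption:
    """Pick the highest-impact assumption; break ties by the shortest test."""
    if not log:
        raise ValueError("write down at least one assumption first")
    return min(log, key=lambda a: (-a.impact_if_wrong, a.days_to_test))


log = [
    Assumption(  # hypothetical entry
        statement="Teams can absorb the new intake model without extra people",
        impact_if_wrong=3,
        days_to_test=30,
        confirming_signal="Cycle time holds steady during the pilot",
        disconfirming_signal="Work queues up at intake",
    ),
    Assumption(  # from the story above; scores and signals are illustrative
        statement="Product owners will change how they write acceptance criteria",
        impact_if_wrong=5,
        days_to_test=14,
        confirming_signal="New work items use the agreed criteria format",
        disconfirming_signal="Criteria look the same as before",
    ),
    Assumption(  # hypothetical entry
        statement="Leadership will tolerate a temporary dip in throughput",
        impact_if_wrong=5,
        days_to_test=60,
        confirming_signal="No escalation after the first slow month",
        disconfirming_signal="Pressure to revert to the old process",
    ),
]

first = next_to_test(log)
print(f"Test first: {first.statement} ({first.days_to_test} days)")
```

The ranking rule is deliberately crude. The point is not the scoring but the habit: every assumption has a written statement, a test small enough to run soon, and a signal agreed on before anyone is invested in the result.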

Why this is also what keeps AFS honest

There’s something I want to be clear about.

The AFS — the Compass, the Four Questions, the building blocks, all of it — is not a conclusion. It’s a working model. It’s a set of hypotheses about how organizations can build adaptive capacity. I believe in it because I’ve watched it clarify problems that felt intractable and help teams find better paths. But I hold it as a practitioner, not a priest.

The Validation Lens is what prevents AFS from becoming ideology.

If something in this system can’t be validated — if there’s no way to know whether it’s working, no signal that would tell you it’s failing, no test that could disprove it — then it doesn’t belong in the system. It belongs in someone’s philosophy blog.

That’s not a throwaway line. It’s the operating constraint. Every time I introduce a concept in this series, the Lens applies: what must be true for this to be useful, and how would you know if it wasn’t?

If you can’t answer that, you don’t have a tool. You have a belief dressed up as a framework.

Where the Lens sits in the bigger picture

At Level 1, the building blocks are the foundational disciplines that make everything else run. The Four Questions give you a recurring orientation. The Compass maps the tensions you’re navigating. The Validation Lens gives you the epistemics — the discipline of knowing what you actually know, versus what you’re hoping is true.

These three don’t run in sequence. They run simultaneously. You’re using the Compass to understand which direction you’re being pulled. You’re using the Four Questions to assess your current fitness. And you’re using the Validation Lens to make sure your understanding is grounded in something real.

In the next post, we’ll look at the fourth building block — the one that connects sensing to action. But before we get there, the Lens is worth sitting with for a while.

A question to close on

Think about a decision you made in the last year — in a team, in a program, in your organization — that didn’t land quite the way you expected.

Looking back: what assumptions were you making that you never wrote down? When was the earliest point you could have tested the most important one?

I’d be genuinely curious what comes up for you. Drop it in the comments, or reply directly if you’re reading this in your inbox.

A bridge to what comes next

The Validation Lens gives you a way of knowing what you actually know versus what you’ve assumed or hoped.

But knowing isn’t the same as moving.

You can surface every assumption, design elegant early tests, detect signals with perfect clarity — and still have an organization that files the findings and keeps running as before. The insight arrives. The meeting happens. Nothing changes.

That gap is what Building Block 4 addresses.

It’s called the AFS Engine — the loop that turns fitness awareness into adjusted practice. Not a retrospective action item. Actual, observable change in how work gets done.

Without the Lens, the Engine runs on bad fuel. Without the Engine, the Lens produces beautifully accurate reports that nobody acts on. They’re designed as a pair.

We’ll get into how the Engine works in the next post.

Adaptive Ways Publications

Licensed under Creative Commons Attribution 4.0 International (CC BY 4.0)

Share freely with attribution.